JAKARTA - As its user base grows rapidly, Bluesky has announced an update to the moderation system on its platform. The update focuses on keeping the platform a friendly space.

Bluesky emphasized that this update does not change the rules already in force. It only simplifies the rules so that violations are easier to track, and it should also make the moderation system more consistent.

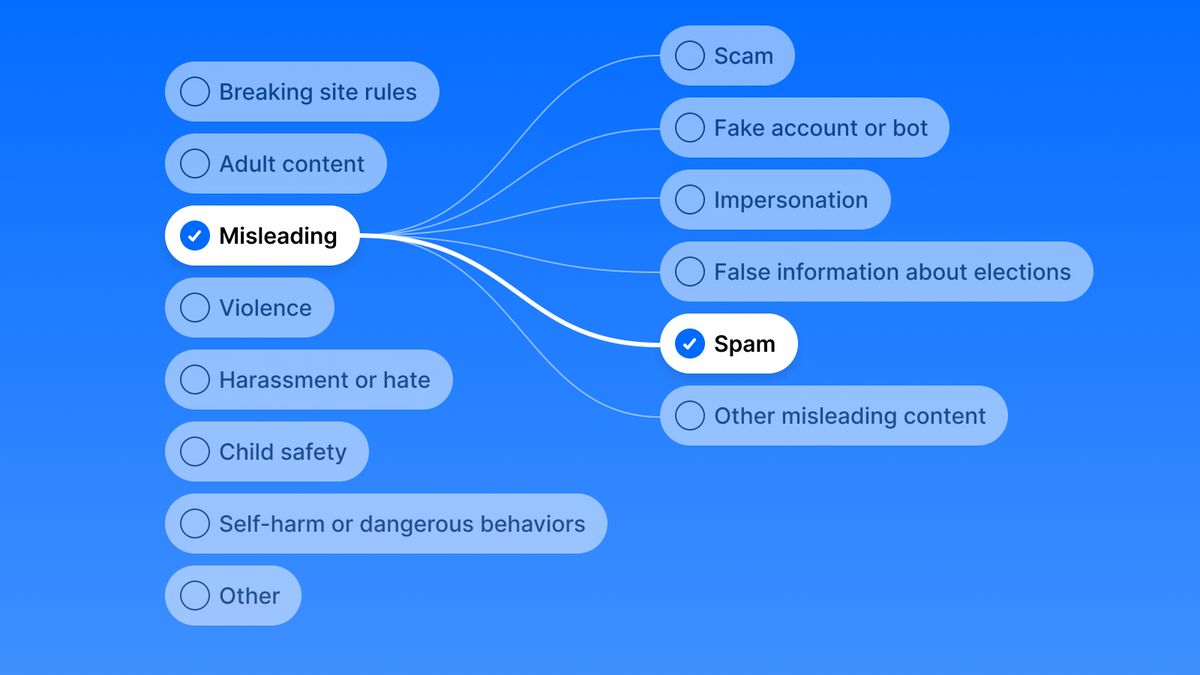

Bluesky is now expanding its post reporting options from 6 to 39 categories. The more specific categories give users a more precise way to flag a problem and help moderators act quickly and accurately.

Bluesky says the expanded reporting options are needed to protect users from specific dangers on social media. For example, users can now directly report Youth Harassment or Bullying and Eating Disorder content.

The platform is also introducing a new Human Trafficking category to meet legal requirements around the world, including the UK's Online Safety Act. In addition, Bluesky is introducing severity ratings based on potential harm.

These severity ratings range from low risk, where the response prioritizes education, to critical risk, which can result in an immediate permanent ban. Bluesky stated, "This system is designed to strengthen transparency, proportionality, and Bluesky accountability."

Bluesky will also take a firmer stance against accounts that violate its rules. Repeated violations will bring escalating consequences, ranging from warnings and content removal to permanent bans.

When it takes enforcement action, Bluesky has promised to give users more detailed information. It will specify which policy was violated, the severity level, and other details.
